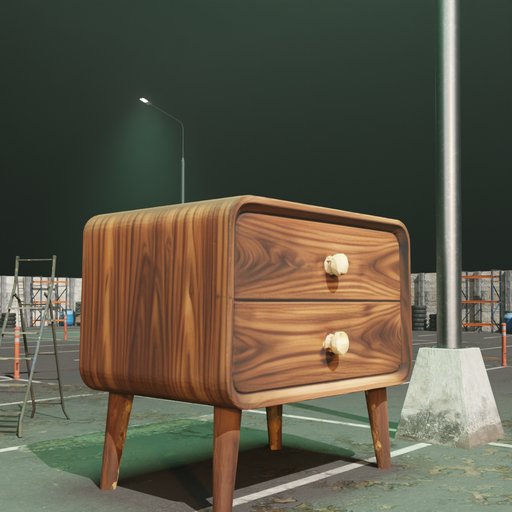

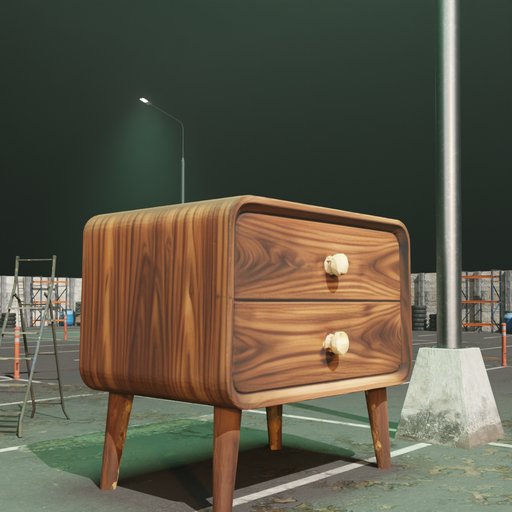

Scene-Aware Rendering Showcase

Best VLM-gated renders across 7 diverse Blender scenes. Each object is automatically placed, lit, and iteratively refined by goal-driven loop agents.

DataEvolver is an autonomous synthetic data construction framework where goal-driven loop agents orchestrate the full pipeline — from text-to-image generation through 3D reconstruction to scene-aware rendering — and iteratively refine output quality via VLM feedback loops.

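```python
# Goal-driven loop agent core
class EvolutionAgent:
    def run_loop(self, scene, obj):
        render = None  # stays None only if max_rounds == 0
        for _ in range(self.max_rounds):
            # Render the current scene/object configuration in Blender
            render = self.blender_render(scene, obj)
            # Free-form VLM critique plus a verdict
            review = self.vlm_review(render)
            if review.verdict == "keep":
                return render  # quality met
            # Pick a targeted adjustment from the structured action space
            action = self.agent_decide(review.text, self.action_space)
            self.apply_action(scene, action)
        return render  # best effort
```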

Naive automated rendering produces artifacts — flat lighting, color shifts, floating objects. Traditional pipelines rely on rigid scoring rules that lack semantic understanding. What's needed are goal-driven agents that can perceive, diagnose, and act.

Auto-rendered 3D objects often exhibit flat lighting, implausible shadows, and color mismatches with the scene environment.

Rigid numeric thresholds can't diagnose "this lighting feels flat" or "the object appears to float." Goal-driven agents with VLM perception can.

Human artists spend minutes per object adjusting Blender parameters. At 50+ objects with 8+ viewpoints, this becomes infeasible.

From a natural language seed concept to quality-verified rendered pairs — fully automated by goal-driven loop agents, no human intervention required.

The heart of DataEvolver: a goal-driven loop agent that perceives rendered outputs via VLM review, diagnoses semantic issues, selects targeted rendering adjustments from a structured action space, and repeats until quality goals are met.

Sign-flip tracking, dead-zone detection, and step-scale scheduling prevent infinite loops and parameter thrashing.
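The three safeguards can be illustrated with a small per-parameter controller. This is a minimal sketch under our own assumptions, not DataEvolver's implementation: the class name `ParamController`, halving the step scale on each sign flip, the dead-zone width, and the flip limit are all hypothetical choices.

```python
from dataclasses import dataclass


@dataclass
class ParamController:
    """Hypothetical anti-thrashing guard for one rendering parameter."""
    value: float
    step: float              # base additive step size
    lo: float                # lower bound of the parameter
    hi: float                # upper bound of the parameter
    dead_zone: float = 1e-3  # changes smaller than this are treated as no-ops
    decay: float = 0.5       # step-scale multiplier applied on every sign flip
    max_flips: int = 3       # lock the parameter after this many reversals
    scale: float = 1.0       # current step-scale factor
    last_sign: int = 0       # direction of the previous request (0 = none yet)
    flips: int = 0           # direction reversals seen so far

    @property
    def locked(self) -> bool:
        return self.flips >= self.max_flips

    def update(self, direction: int) -> bool:
        """Request one step in `direction` (+1 or -1); return True if the value changed."""
        if self.locked or direction == 0:
            return False
        # Sign-flip tracking: a reversal signals oscillation, so shrink future steps.
        if self.last_sign != 0 and direction != self.last_sign:
            self.flips += 1
            self.scale *= self.decay
        self.last_sign = direction
        if self.locked:
            return False  # too many reversals: stop touching this parameter
        delta = direction * self.step * self.scale
        new_value = min(self.hi, max(self.lo, self.value + delta))
        # Dead-zone detection: an update that barely moves the value (for
        # example because it is pinned at a bound) is skipped as a no-op.
        if abs(new_value - self.value) < self.dead_zone:
            return False
        self.value = new_value
        return True
```

Halving on every reversal makes a bouncing parameter settle geometrically, and `max_flips` puts a hard cap on how long it may keep bouncing, so the outer loop cannot thrash indefinitely.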

Objects placed in real Blender scenes with HDRI environments. Raycast ground detection ensures physical plausibility.
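Raycast ground detection amounts to casting a ray straight down from the object and snapping its lowest point onto the first surface hit. The sketch below is a pure-Python stand-in under stated assumptions: it runs the Möller–Trumbore ray–triangle test against a hypothetical list of ground triangles, whereas inside Blender one would more likely use the scene's built-in ray casting. The helper names `ray_triangle` and `ground_offset` and the `lift` margin are illustrative only.

```python
from typing import Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Tri = Tuple[Vec3, Vec3, Vec3]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def ray_triangle(origin: Vec3, direction: Vec3, tri: Tri,
                 eps: float = 1e-9) -> Optional[float]:
    """Moller-Trumbore test: distance t along the ray to the hit, or None."""
    v0, v1, v2 = tri
    e1, e2 = _sub(v1, v0), _sub(v2, v0)
    p = _cross(direction, e2)
    det = _dot(e1, p)
    if abs(det) < eps:  # ray parallel to the triangle plane
        return None
    inv = 1.0 / det
    s = _sub(origin, v0)
    u = _dot(s, p) * inv
    if u < 0.0 or u > 1.0:
        return None
    q = _cross(s, e1)
    v = _dot(direction, q) * inv
    if v < 0.0 or u + v > 1.0:
        return None
    t = _dot(e2, q) * inv
    return t if t > eps else None


def ground_offset(bottom: Vec3, triangles: Sequence[Tri],
                  lift: float = 1.0) -> Optional[float]:
    """Z translation that rests `bottom` (the object's lowest point) on the ground.

    The ray starts `lift` above the bottom point so a slightly sunken object
    still finds the surface it is embedded in. Returns None if nothing is hit.
    """
    origin = (bottom[0], bottom[1], bottom[2] + lift)
    down = (0.0, 0.0, -1.0)
    hits = [t for tri in triangles
            if (t := ray_triangle(origin, down, tri)) is not None]
    if not hits:
        return None
    return lift - min(hits)  # negative: move down (floating); positive: move up (sunken)
```

A floating object gets a negative offset and a sunken one a positive offset; a `None` result would signal that the placement has no ground beneath it and should be rejected or retried.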

Structured action space across lighting, object transform, scene environment, and material property groups.

A benchmark dataset for rotation-conditioned image editing. Each sample pairs a canonical front-view image with a target view specified in natural language.

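```python
import json
from pathlib import Path

from PIL import Image

root = Path("dataset_scene_v7_full50_rotation8_...")

# Each non-empty line of the JSONL file is one source/target pair record
rows = []
with (root / "pairs/train_pairs.jsonl").open("r", encoding="utf-8") as f:
    for line in f:
        if line.strip():
            rows.append(json.loads(line))

row = rows[0]
source = Image.open(root / row["source_image"]).convert("RGB")  # canonical front view
target = Image.open(root / row["target_image"]).convert("RGB")  # target view
instruction = row["instruction"]  # natural-language description of the target view
```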


The AI agent selects from a discrete, structured action space to address VLM-identified issues. Each action targets a specific rendering parameter.

- ×1.2 / ×0.8 multiplicative, bounded [0.5, 2.0]
- ±15° yaw step, bounded [-90°, 90°]
- ×1.2 / ×0.8 multiplicative, bounded [0.5, 2.0]
- ±30° step, bounded [-180°, 180°]
- ±0.02 step, bounded [-0.1, 0.1]
- ±0.08 step, bounded [-0.3, 0.6]

Plus 18 more actions; see `scene_action_space.json`.
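The rules above come in two shapes: multiplicative factors (×1.2 / ×0.8) and additive steps (±15°, ±30°, ±0.02, ±0.08), each clamped to a fixed range. A minimal sketch of applying such rules follows. The `ActionRule` schema, the action names, and the parameter names other than yaw are hypothetical placeholders of our own; the real definitions live in `scene_action_space.json`, which is not reproduced here.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class ActionRule:
    """One parameter-update rule (hypothetical schema, not scene_action_space.json)."""
    param: str    # parameter the action edits
    mode: str     # "mul" for multiplicative, "add" for additive
    amount: float # factor (mul) or step (add)
    lo: float     # lower bound after the update
    hi: float     # upper bound after the update


# Hypothetical action table; the name-to-rule pairing is an assumption.
ACTIONS: Dict[str, ActionRule] = {
    "intensity_up":   ActionRule("intensity", "mul", 1.2, 0.5, 2.0),
    "intensity_down": ActionRule("intensity", "mul", 0.8, 0.5, 2.0),
    "yaw_plus":       ActionRule("yaw_deg", "add", 15.0, -90.0, 90.0),
    "yaw_minus":      ActionRule("yaw_deg", "add", -15.0, -90.0, 90.0),
    "env_rot_plus":   ActionRule("env_rot_deg", "add", 30.0, -180.0, 180.0),
}


def apply_action(params: Dict[str, float], name: str) -> Dict[str, float]:
    """Return a copy of `params` with action `name` applied and clamped to its bounds."""
    rule = ACTIONS[name]
    current = params[rule.param]
    if rule.mode == "mul":
        updated = current * rule.amount
    elif rule.mode == "add":
        updated = current + rule.amount
    else:
        raise ValueError(f"unknown mode: {rule.mode}")
    out = dict(params)  # leave the caller's dict untouched
    out[rule.param] = min(rule.hi, max(rule.lo, updated))
    return out
```

Clamping after every step means no sequence of actions can push a parameter outside its declared range, which keeps the agent's search space finite.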

What sets DataEvolver apart from other synthetic data pipelines and why goal-driven loop agents produce better training data.

Free-form natural language feedback provides semantic diagnosis that numeric scores cannot. The reviewer identifies why a render fails.

The agent reads raw review text and reasons about which action to take — no scripted rule engine, no score-threshold mapping.
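The decision step can be pictured as prompt construction plus validation: the raw review text and the allowed actions go into a prompt, and the model's answer is checked against the action space before anything touches the scene. The sketch below assumes a generic `llm` callable (prompt in, text out) and a JSON reply shape of our own choosing; neither is taken from DataEvolver, and the prompt wording is illustrative.

```python
import json
from typing import Callable, List, Optional


def build_prompt(review_text: str, action_space: List[str]) -> str:
    """Assemble a decision prompt from the reviewer's free-form critique."""
    actions = "\n".join(f"- {a}" for a in action_space)
    return (
        "A vision-language reviewer critiqued a rendered image:\n"
        f"{review_text}\n\n"
        "Choose exactly one action that best addresses the critique.\n"
        f"Allowed actions:\n{actions}\n\n"
        'Reply as JSON: {"action": "<name>", "reason": "<short reason>"}'
    )


def agent_decide(review_text: str, action_space: List[str],
                 llm: Callable[[str], str]) -> Optional[str]:
    """Ask the model for an action; return it only if it is in the action space."""
    reply = llm(build_prompt(review_text, action_space))
    try:
        choice = json.loads(reply).get("action")
    except (json.JSONDecodeError, AttributeError):
        return None  # unparseable or non-object reply: caller may retry or stop
    return choice if choice in action_space else None
```

The model does the semantic reasoning, while the membership check keeps a hallucinated action name from ever reaching the renderer.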

LoRA fine-tuning on DataEvolver-Rotate improves Qwen Image Edit 2511 on PSNR, SSIM, and LPIPS vs. the base model.
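When reading such results, higher PSNR and SSIM and lower LPIPS indicate closer agreement with the ground-truth target view. PSNR is the simplest of the three, PSNR = 10 · log10(MAX² / MSE); a minimal pure-Python version for 8-bit pixel values is sketched below. It follows the textbook definition and is not necessarily the evaluation code behind the reported numbers; SSIM (windowed statistics) and LPIPS (a pretrained network) are omitted.

```python
import math
from typing import Sequence


def psnr(pred: Sequence[float], target: Sequence[float], max_val: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB between two equally sized flat pixel arrays."""
    if len(pred) != len(target) or not pred:
        raise ValueError("inputs must be non-empty and the same length")
    mse = sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_val ** 2 / mse)
```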

Beyond RGB pairs: mask, depth, normal maps, and geometry metadata — enabling multi-modal conditioning research.

The models, frameworks, and infrastructure powering the DataEvolver pipeline.

DataEvolver runs on a Linux server with GPU access and Blender. Clone the repo and start building self-improving data pipelines.

```bash
git clone https://github.com/PRIS-CV/DataEvolver.git
cd DataEvolver

# Explore the pipeline
ls pipeline/   # 6-stage data synthesis
ls configs/    # Action space, scene templates
ls scripts/    # Agent monitor, dataset builders

# Read the full documentation
cat CLAUDE.md  # Comprehensive project guide
```

If you use DataEvolver or DataEvolver-Rotate in your research, please cite our work.

```bibtex
@misc{zhang2026dataevolverletdatabuild,
  title         = {DataEvolver: Let Your Data Build and Improve Itself via Goal-Driven Loop Agents},
  author        = {Qisong Zhang and Wenzhuo Wu and Zhuangzhuang Jia and
                   Yunhao Yang and Huayu Zhang and Xianghao Zang and
                   Zhixiang He and Zhongjiang He and Kongming Liang and
                   Zhanyu Ma},
  year          = {2026},
  eprint        = {2605.01789},
  archivePrefix = {arXiv},
  primaryClass  = {cs.AI},
  url           = {https://arxiv.org/abs/2605.01789}
}
```

Clone the repository and start running goal-driven loop agents on your own scenes and objects.
